Evaluating Performance of Modern Business PCs - rosexyle1976

While there are many factors that can go into PC purchase decisions, performance still ranks as the top concern for companies of all sizes. Many companies look to performance benchmarks to help determine which system would best serve their needs. Yet these benchmarks may not provide the complete performance picture. Are they based on professional applications or mostly consumer workloads? What were the test conditions? In this article, we'll look at some key considerations for using benchmarks to evaluate the performance of modern business PCs, and at how to ensure that you choose the right system for current and future needs.

The Old-School Method Of Measuring PC Performance

Traditionally, companies have used various physical specifications, such as CPU frequency and cache size, to set a baseline for PC performance. There are two problems with this approach. First, you can take two processors that operate at the same frequency and see dramatically different performance due to the efficiency of their underlying implementations, something measured as "Instructions Per Clock" (IPC). The second problem is that for most modern processors, frequency is not a constant. This is especially true for processors in notebook PCs, where the frequency is constrained by power considerations. Frequency will also vary dramatically depending on the type of task being performed, the task duration, the number of cores being used, etc.
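As a rough illustration of the first problem, instruction throughput can be approximated as IPC multiplied by clock frequency. The sketch below uses made-up IPC values (assumptions for illustration, not measurements of any real processor) to show how two chips running at the same 3.0 GHz clock can differ in throughput.

```python
def instructions_per_second(ipc: float, frequency_ghz: float) -> float:
    """Approximate throughput as IPC x clock frequency (instructions per second)."""
    return ipc * frequency_ghz * 1e9


# Hypothetical processors: same clock, different IPC (illustrative values only).
chip_a = instructions_per_second(ipc=2.0, frequency_ghz=3.0)
chip_b = instructions_per_second(ipc=2.5, frequency_ghz=3.0)

print(f"Chip A: {chip_a:.2e} instructions/s")
print(f"Chip B: {chip_b:.2e} instructions/s")
print(f"Chip B is {chip_b / chip_a:.2f}x faster at the same frequency")
```

Because frequency itself shifts with power limits, task type, and core count, even this product is only a snapshot, which is the second problem with relying on specifications.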

Evaluating Performance With Real-World Tests

Modern applications are highly complex, involving various underlying algorithms and data access patterns. As a result, the measured efficiency of a processor (that is, its IPC) will often vary substantially between applications and even between workloads. Some applications include functions that require displaying graphics on the screen or reading data from storage or the network; for these workloads, CPU performance, while important, is not the only factor to consider.

One of the best ways to evaluate the performance of a new PC is to conduct a real-world test. In other words, have actual users do their everyday tasks in the working environment using real-world data. The experience of these users will likely correlate better with their future satisfaction, and it will be more accurate than any published benchmark. This approach is not without downsides, however, including the time required to perform the evaluation, the difficulty of determining which workloads to measure, and the challenge of measuring performance in a consistent, fair, and unbiased manner.

Beyond individual user testing, the next-best approach would be for in-house developers to take input from users and create "bespoke" scripts to measure application performance in a way that matches the priorities of those users. This approach can improve the consistency of performance measurements and provide repeatable results. Yet it is still a considerable task and can be difficult to maintain across different PC generations.
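A bespoke script of this kind can start as a small harness that times a representative task several times so the results are repeatable. The Python sketch below is hypothetical: `run_report_export` is a placeholder for whatever task the organization's users actually care about, and the warm-up and run counts are assumptions, not recommendations from any standard.

```python
import time
from statistics import mean
from typing import Callable, List


def time_task(task: Callable[[], None], runs: int = 5, warmup: int = 1) -> List[float]:
    """Run `task` repeatedly and return the wall-clock duration of each measured run.

    Warm-up runs are executed but not recorded, so one-time costs such as
    cold caches do not skew the measured runs.
    """
    for _ in range(warmup):
        task()
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        task()
        durations.append(time.perf_counter() - start)
    return durations


def run_report_export() -> None:
    """Hypothetical stand-in for a real user workload (e.g. exporting a report)."""
    rows = [str(i) * 10 for i in range(200_000)]
    ",".join(rows)


if __name__ == "__main__":
    timings = time_task(run_report_export, runs=5)
    print(f"runs: {len(timings)}, mean: {mean(timings):.4f} s")
```

Keeping the task behind a plain callable means the same harness can be reused as workloads change, which helps with the maintenance burden across PC generations.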

Instead, most companies rely on the results of industry-standard PC benchmarks to evaluate system performance. Rather than just using one benchmark, companies can get a broader picture of performance by building a composite score across several benchmarks.

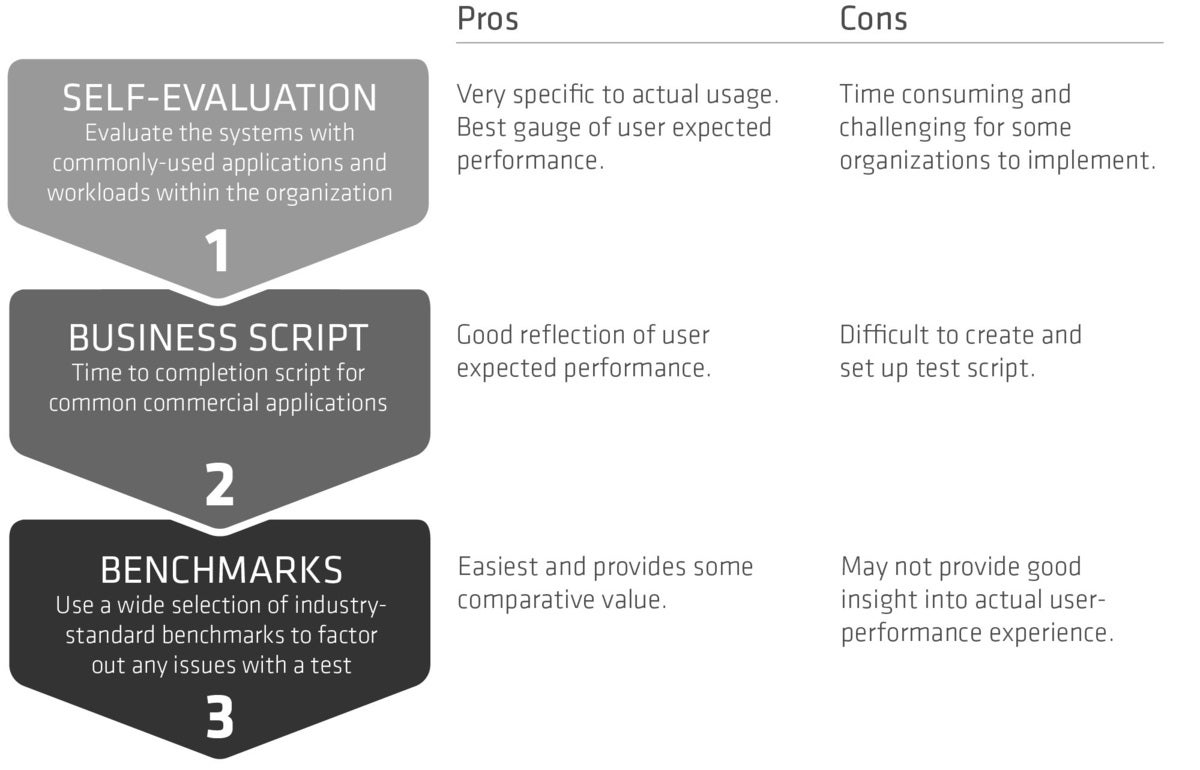

Figure 1 compares three different approaches to evaluating PC performance (benchmarks, application scripts, and user evaluations) and shows how the results have different levels of business relevance.

Figure 1 – PC Evaluation Strategy

What Makes A Good Benchmark?

Two types of benchmarks are commonly used to evaluate PC performance: "synthetic" and "application-based." Both types can be useful in the decision process, although individual benchmarks can often have undesirable attributes. This can be mitigated by following a general principle of using multiple benchmarks together to bring forth a broader, more reliable picture of performance.

A good benchmark should be as transparent as possible, with a clear description of what the benchmark is testing and its testing methods. In the case of application-based benchmarks, this allows buyers to understand whether the workloads being used match their organization's usage. Without sufficient transparency, the question can also come up as to whether the tests were selected to favor one particular architecture over another.

Not All Application-Based Benchmarks Are Equal

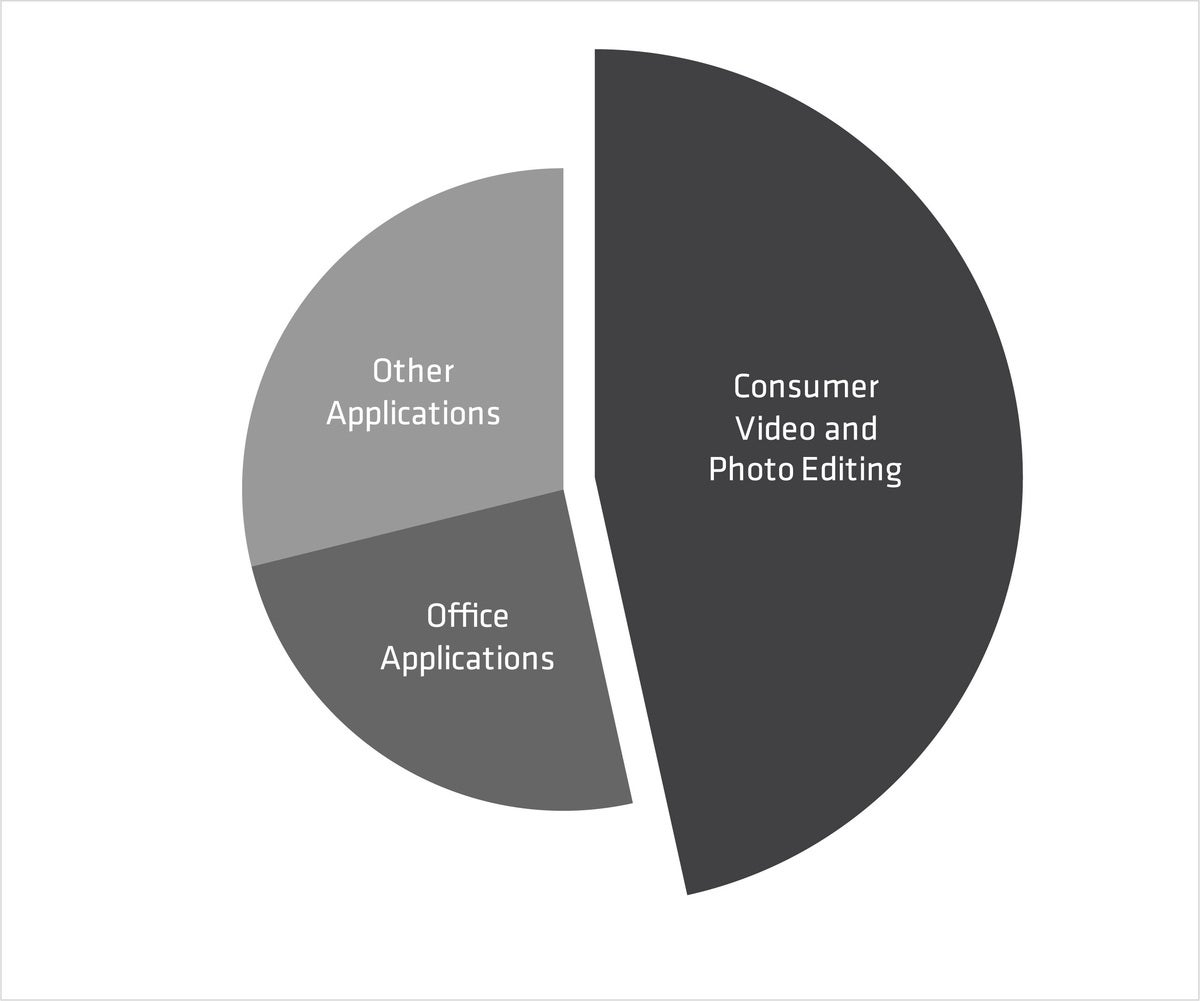

The tests in application-based benchmarks should represent the workloads that are most relevant to the organization. For example, if 30-50% of a benchmark comes from applications that are rarely used in a business setting, then that score is probably not applicable. Consider the benchmark in Figure 2, which is based primarily on consumer-centric workloads and has a low percentage of office application use. This benchmark would therefore likely not be useful for most commercial organizations.

Figure 2 – A benchmark composition not suitable for commercial environments
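One way to apply the 30-50% rule of thumb is to tally how much of a benchmark's workload mix the organization actually uses. The sketch below assumes you know each workload's share of the overall score; the category names and weights are hypothetical and are not taken from Figure 2. It flags the benchmark when 30% or more of the score comes from workloads rarely used in the business.

```python
from typing import Dict, Set


def irrelevant_share(composition: Dict[str, float], used_in_business: Set[str]) -> float:
    """Fraction of a benchmark's score weight that comes from workloads the business rarely uses."""
    total = sum(composition.values())
    unused = sum(weight for name, weight in composition.items() if name not in used_in_business)
    return unused / total


# Hypothetical benchmark composition (weights as fractions of the overall score).
composition = {
    "office_productivity": 0.20,
    "web_browsing": 0.20,
    "media_playback": 0.25,
    "gaming": 0.35,
}
business_workloads = {"office_productivity", "web_browsing"}

share = irrelevant_share(composition, business_workloads)
print(f"{share:.0%} of the score comes from rarely used workloads")
if share >= 0.30:  # lower end of the 30-50% range mentioned in the text
    print("This benchmark is probably not representative of business use")
```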

Some application-based benchmarks measure the performance of off-the-shelf applications, but they may not represent the version of the application deployed in the organization or include the latest performance optimizations from the software vendors. This is where synthetic benchmarks come in.

Evaluating The Performance Potential Of A Platform

Unlike application-based benchmarks, synthetic benchmarks measure the overall performance potential of a particular platform. While application benchmarks show how well a platform is optimized for specific versions of certain applications, they are not always a good predictor of new application performance. For example, many video conferencing solutions use multiple CPU cores to execute functions such as virtual backgrounds. Synthetic benchmarks that measure the multi-threaded capability of a platform can be used to predict how well a platform can deliver this new functionality.

With synthetic benchmarks, it is important to avoid using a narrow measure of performance. Individual processors, even in the same family, can vary in how they handle even a small piece of code. A synthetic benchmark score should comprise several individual tests, executing more lines of code that exercise different workloads. This provides a much broader view of platform performance.

Multi-Tasking Is Hard To Benchmark

Application benchmarks have a hard time simulating the background workload of a modern multi-tasking office worker. The reason is that running multiple applications simultaneously can add a larger margin of test error than testing one application at a time. Synthetic benchmarks that measure the raw multi-threaded processing power of a platform are a good proxy for the demands of today's multi-tasking users.

A best practice is to consider both application-based and synthetic benchmark scores together. By combining scores using a geometric mean, you can account for the different score scales of different benchmarks. This provides the best picture of performance for a specific platform, considering the applications used today and providing for the future.
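The geometric-mean approach can be sketched in a few lines of Python with the standard library's `statistics.geometric_mean`. This is a minimal illustration, assuming each benchmark score is first divided by the score of a reference system (the benchmark names and numbers are hypothetical), so every benchmark contributes a dimensionless ratio where 1.0 equals reference performance.

```python
from statistics import geometric_mean
from typing import Dict


def composite_score(scores: Dict[str, float], reference: Dict[str, float]) -> float:
    """Geometric mean of each benchmark score relative to a reference system.

    Dividing by the reference turns every benchmark into a ratio, so a
    benchmark that reports scores in the thousands does not outweigh one
    that reports scores in the hundreds.
    """
    ratios = [scores[name] / reference[name] for name in reference]
    return geometric_mean(ratios)


# Hypothetical scores on three benchmarks with very different native scales.
reference_pc = {"app_benchmark": 5000.0, "synthetic_mt": 2000.0, "synthetic_st": 500.0}
candidate_pc = {"app_benchmark": 5500.0, "synthetic_mt": 2600.0, "synthetic_st": 500.0}

print(f"Composite vs. reference: {composite_score(candidate_pc, reference_pc):.3f}")
```

An arithmetic mean of the raw scores would be dominated by whichever benchmark uses the largest numbers; with the geometric mean, rescaling any one benchmark's scores for both systems leaves the composite unchanged.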

Other Significant Considerations

Benchmarks are an important part of a system evaluation. However, these powerful tools can have some key limitations:

- Measured benchmark performance can vary by operating system (OS) and application version; ensure that these versions match what's in use in your environment.

- Other conditions can impact scores, such as background tasks, room temperature, and OS features such as virtualization-based security (VBS) enablement. Again, ensure that the conditions are the same and match your deployments.

- Some users may use relatively niche applications and functions not covered by the benchmarks. Consider augmenting benchmark scores with user measurements and correlating them with synthetic benchmark scores.
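Matching test conditions to the deployment is easier if the conditions are recorded alongside each score and compared against a baseline. The sketch below is a hypothetical checklist; the field names and placeholder values are illustrative assumptions, not output from any real inventory or benchmarking tool.

```python
from typing import Dict, List


def mismatched_conditions(test_env: Dict[str, str], deployment: Dict[str, str]) -> List[str]:
    """Describe each deployment condition that the benchmark run did not match (or did not record)."""
    return [
        f"{key}: tested with {test_env.get(key, '<missing>')!r}, deployed with {expected!r}"
        for key, expected in deployment.items()
        if test_env.get(key) != expected
    ]


# Hypothetical records; values are placeholders, not real version strings.
deployment_baseline = {
    "os_version": "OS build A",
    "office_suite_version": "Suite 1.2",
    "vbs_enabled": "yes",
}
benchmark_run = {
    "os_version": "OS build A",
    "office_suite_version": "Suite 1.3",
    "vbs_enabled": "no",
}

for problem in mismatched_conditions(benchmark_run, deployment_baseline):
    print("Mismatch ->", problem)
```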

Beware Of Measurement Error

Any measurement will have a margin of "measurement error," that is, how much it may vary from one test to another. Most benchmarks have an overall measurement error in the 3-5% range, caused by a variety of factors including the limitations of measurement time, the "butterfly effect" of minor changes in OS background tasks, etc. One way to overcome this error would be to measure results five times, discard the highest and lowest scores, and take the mean of the remaining three scores.
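The five-run procedure is a simple trimmed mean. A minimal Python sketch, using hypothetical scores from five runs of one benchmark on one system:

```python
from statistics import mean
from typing import Sequence


def trimmed_score(runs: Sequence[float]) -> float:
    """Drop the single highest and lowest run, then average the rest.

    With five runs, as described in the text, this averages the middle three.
    """
    if len(runs) < 3:
        raise ValueError("need at least three runs to discard the extremes")
    ordered = sorted(runs)
    return mean(ordered[1:-1])


# Five hypothetical runs of the same benchmark on one system.
runs = [1012.0, 987.0, 1003.0, 1040.0, 995.0]
print(f"Trimmed score: {trimmed_score(runs):.1f}")
```

Here the 1040.0 and 987.0 runs are discarded, so a single unusually fast or slow run does not move the reported score.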

It is important to consider measurement error when setting requirements in purchase requisitions. If a score of X correlates closely with user satisfaction, then the requisition should specify that scores should be within [X - epsilon, X + epsilon], where epsilon is the known measurement error. When epsilon is not known, it is reasonable to assume it is in the 3-5% range of the target score.
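The requisition rule can be expressed as a small check. This sketch assumes that when epsilon is unknown it is taken as a fraction of the target score (5% by default here, the upper end of the 3-5% range); the function names are hypothetical.

```python
from typing import Optional, Tuple


def acceptance_window(target: float, epsilon: Optional[float] = None,
                      assumed_error: float = 0.05) -> Tuple[float, float]:
    """Return (X - epsilon, X + epsilon) for a target score X.

    If the benchmark's measurement error is unknown, epsilon is assumed to be
    `assumed_error` times the target score.
    """
    if epsilon is None:
        epsilon = assumed_error * target
    return target - epsilon, target + epsilon


def classify(measured: float, target: float, epsilon: Optional[float] = None,
             assumed_error: float = 0.05) -> str:
    """Report whether a measured score falls below, within, or above the window."""
    low, high = acceptance_window(target, epsilon, assumed_error)
    if measured < low:
        return "below"
    if measured > high:
        return "above"
    return "within"


print(acceptance_window(1000.0))   # window for X = 1000 with an assumed 5% error
print(classify(960.0, 1000.0))     # inside the assumed window
print(classify(965.0, 1000.0, epsilon=30.0))  # known error of 30 points
```

Reporting "above" separately from "below" is a design choice in this sketch: a result above the window exceeds the target rather than failing it, and how to treat it is left to the requisition.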

Conclusion

Properly evaluating performance is not a straightforward task. There are different techniques that an organization can use to determine which system would best meet its needs. Using a single benchmark score may lead to incorrect conclusions, while the best overall picture of performance comes from looking at a broad range of both application-based and synthetic benchmarks.

The final and best step in any evaluation is to allow groups of users to "test-drive" systems in their actual work environment. Regardless of how well a system scores on benchmarks or on application scripts, users must be satisfied with their experience. Whether you use benchmarks, application scripts, or organizational trials to measure performance, the AMD Ryzen PRO 4000 series of processors delivers new levels of speed and efficiency to delight today's users. Learn how to get the notebook PC performance you need to address today's computing requirements, along with the power to meet future business demands.

For more information on how AMD Ryzen PRO processors can meet the performance needs of your organization, visit: https://www.amd.com/en/processors/laptop-processors-for-business or https://www.amd.com/en/ryzen-pro

Source: https://www.pcworld.com/article/393778/evaluating-performance-of-modern-business-pcs.html

Posted by: rosexyle1976.blogspot.com
